Data Security Compliance: Evidence Auditors Expect to See

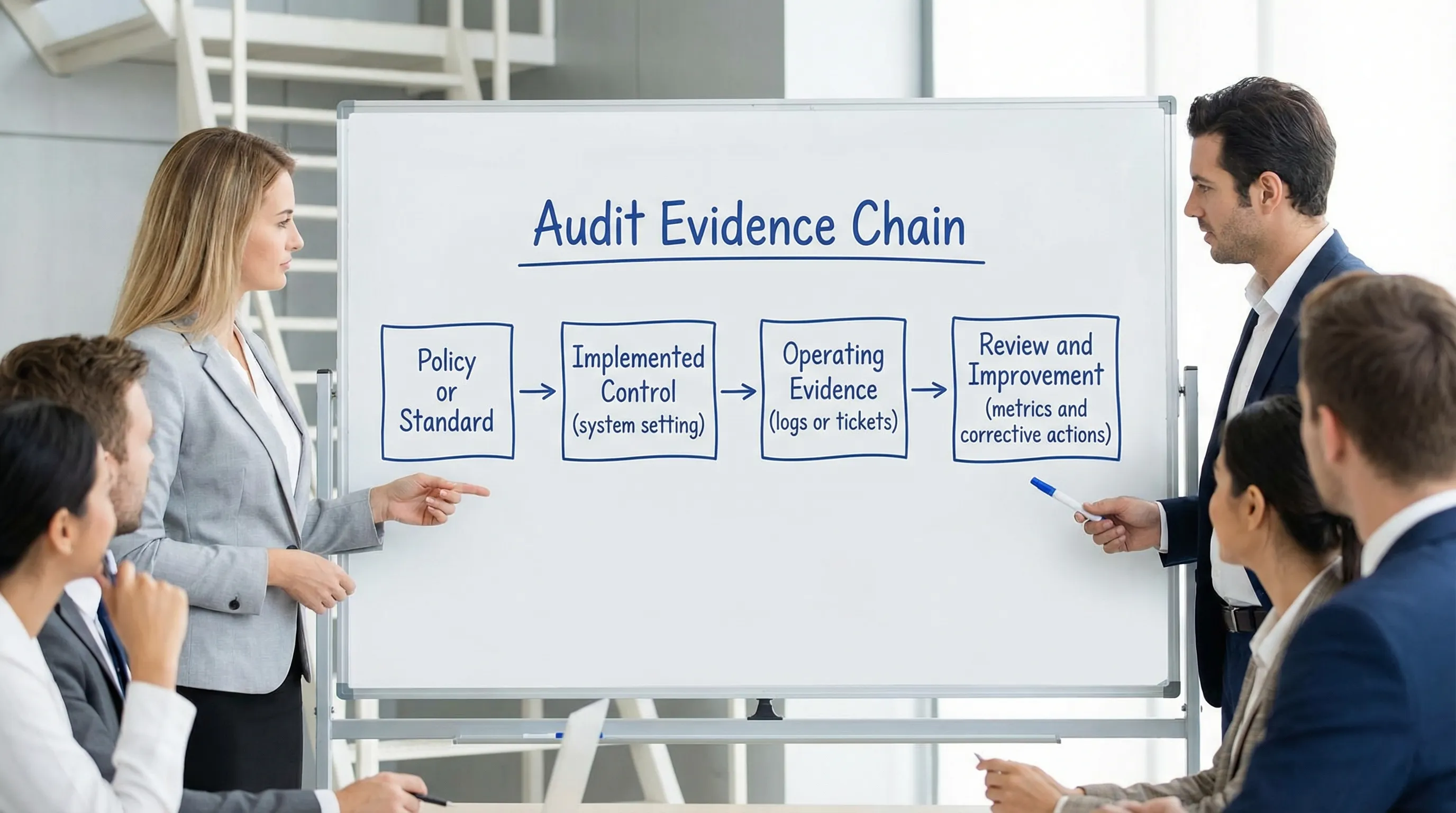

Auditors rarely fail an organisation because it lacks policies. They fail organisations because the evidence does not show the controls are operating consistently, across real systems, for real people, over a defined period.

If you are preparing for a data protection review, a customer security questionnaire, an ISO-aligned internal audit, or a regulator-facing assurance exercise, this guide breaks down what “data security compliance” evidence typically looks like in practice, and how to package it so an auditor can verify it quickly.

What counts as “evidence” in data security compliance?

In an audit, “evidence” is anything that demonstrates:

Design: the control exists and is appropriate (policy, standard, procedure, configuration baseline).

Operation: the control is actually used (logs, tickets, approvals, reports, training records).

Effectiveness: the control works (test results, restore tests, vulnerability remediation trends, incident post-mortems).

Auditors also care about basic evidence qualities:

Traceable: clearly tied to a specific control requirement.

Time-bound: shows a period (for example, last quarter, last 12 months).

Complete: not a single screenshot with no context, but enough supporting material for the auditor to validate the control.

Tamper-resistant: comes from authoritative sources (system logs, HR records, ticketing systems) with clear ownership.

In Jamaica, the Data Protection Act requires organisations to protect personal data with appropriate security and governance. Even when the audit is not labelled “Data Protection Act audit”, most assurance requests still converge on similar security proof points (access control, encryption, monitoring, supplier management, and incident readiness).

The evidence auditors expect (by control area)

The most efficient way to prepare is to build an evidence pack grouped by control domains. Below are the domains auditors commonly test, and what “good evidence” looks like.

1) Governance and accountability

Auditors look for proof that security and privacy are managed, not improvised.

Typical evidence:

Information security and data protection policy suite (approved versions, review dates, owners).

Roles and responsibilities (for example, who approves access, who manages incidents, who owns vendors).

Governance artefacts such as risk committee minutes, governance, risk and compliance (GRC) meeting notes, or management review outputs.

Risk register entries showing data security risks, ratings, treatment plans, and due dates.

Common audit finding: policies exist but are out of date, not approved, or not mapped to operations.

2) Data inventory, classification, and “what data lives where”

A strong security posture starts with clarity on what personal data you hold, where it flows, and who touches it.

Typical evidence:

Data inventory (systems, data types, owners, retention periods, processing purpose and legal basis).

Data flow diagrams for key processes (customer onboarding, payroll, marketing, support).

Data classification standard and examples of classification applied (labels, handling rules).

High-risk assessments where relevant (for example, documented assessments for sensitive processing, new platforms, or major changes).

If you already have a broader privacy compliance programme, you can align this section with your operational mapping work. (PLMC has also published practical guidance you can use as a baseline, such as its Data Protection Jamaica: Compliance Roadmap for 2026.)

3) Access control and identity management (IAM)

This is one of the most evidence-heavy areas, and one of the easiest to fail if the organisation relies on informal practices.

Typical evidence:

Joiner, mover, leaver workflow evidence (HR trigger, approvals, provisioning, deprovisioning).

Multi-factor authentication (MFA) enforcement proof for key systems (identity provider settings, admin screenshots, conditional access rules).

Privileged access management approach (named admin accounts, break-glass process, least privilege model).

Periodic access reviews (who performed them, results, remediation actions).

A small sample of access requests and approvals from the last period.

Common audit finding: access is granted promptly, but not reviewed, and leavers retain access.
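The leaver finding above lends itself to a simple reconciliation between HR and identity data. The following is a minimal sketch in Python, assuming you can export a leaver list from HR and a list of enabled accounts from your identity provider. The field names (`employee_id`, `last_day`, `username`) and the sample records are hypothetical and not tied to any specific product.

```python
from datetime import date

# Hypothetical HR export: one record per leaver in the audit period.
hr_leavers = [
    {"employee_id": "E102", "last_day": date(2025, 3, 14)},
    {"employee_id": "E215", "last_day": date(2025, 4, 30)},
]

# Hypothetical identity provider export: accounts that are still enabled.
active_accounts = [
    {"employee_id": "E001", "username": "a.brown"},
    {"employee_id": "E215", "username": "k.reid"},
]


def leavers_with_access(leavers, accounts, as_of):
    """Return leavers whose last day has passed but whose account is still enabled."""
    enabled = {acct["employee_id"]: acct["username"] for acct in accounts}
    findings = []
    for person in leavers:
        if person["last_day"] < as_of and person["employee_id"] in enabled:
            findings.append(
                {
                    "employee_id": person["employee_id"],
                    "username": enabled[person["employee_id"]],
                    "days_overdue": (as_of - person["last_day"]).days,
                }
            )
    return findings


exceptions = leavers_with_access(hr_leavers, active_accounts, as_of=date(2025, 5, 10))
for item in exceptions:
    print(item)
```

Running a check like this on a schedule, and keeping the dated output with the remediation ticket, gives an auditor both the operating evidence and the follow-up trail.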

4) Technical security controls (patching, configuration, encryption, endpoints)

Auditors want to see both a standard and the operational trail.

Typical evidence:

Secure configuration standards (baseline hardening references, approved exceptions).

Patch management policy plus patch compliance reports (for servers, endpoints, network devices).

Vulnerability management outputs (scan results, prioritisation, remediation tickets, closure proof).

Endpoint protection evidence (deployment coverage, update status, alert handling procedures).

Encryption evidence where applicable (device encryption enforcement, database encryption approach, key management ownership).

Where useful, you can map your evidence to recognised frameworks such as the NIST Cybersecurity Framework to show completeness and consistency.
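For the vulnerability management outputs listed above, auditors usually want to see how long findings stayed open, not only that scans were run. The sketch below flags findings that exceeded a remediation target. The targets in `SLA_DAYS`, the field names, and the sample findings are assumptions for illustration. Use the timeframes from your own patch and vulnerability policy.

```python
from datetime import date

# Assumed remediation targets in days per severity; replace with your own policy values.
SLA_DAYS = {"critical": 7, "high": 30, "medium": 90}

# Hypothetical scanner export. "closed" is None while a finding is still open.
findings = [
    {"id": "V-1", "severity": "critical", "opened": date(2025, 5, 1), "closed": date(2025, 5, 5)},
    {"id": "V-2", "severity": "high", "opened": date(2025, 3, 1), "closed": None},
    {"id": "V-3", "severity": "medium", "opened": date(2025, 4, 20), "closed": None},
]


def overdue_findings(items, as_of):
    """Return (id, severity, age_in_days) for findings that exceeded their target.

    Open findings are aged up to `as_of`; closed findings are aged up to their
    closure date, so late closures are reported as well.
    """
    overdue = []
    for finding in items:
        end = finding["closed"] or as_of
        age = (end - finding["opened"]).days
        if age > SLA_DAYS[finding["severity"]]:
            overdue.append((finding["id"], finding["severity"], age))
    return overdue


report = overdue_findings(findings, as_of=date(2025, 6, 1))
for row in report:
    print(row)
```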

5) Logging, monitoring, and alert response

Auditors increasingly test whether an organisation can detect suspicious activity, not just prevent it.

Typical evidence:

Central logging coverage statement: which systems log, what events are captured, and retention periods.

Samples of security event reviews (weekly checks, security operations centre (SOC) dashboards, alert triage tickets).

Evidence of time synchronisation approach (so logs can be correlated).

Access logs for privileged activity (admin actions, configuration changes) where possible.

Common audit finding: logs exist but are not reviewed, not retained long enough, or not tied to a response process.

6) Incident response and breach readiness

Even with strong controls, incidents happen. Auditors look for readiness and learning.

Typical evidence:

Incident response policy and playbooks (including decision-making and escalation).

Incident register with severity ratings and closure actions.

Tabletop exercise reports (what was tested, gaps, remediation owners).

Post-incident reviews showing root cause and control improvements.

From a data protection perspective, readiness should also connect to how you identify and manage incidents involving personal data.

7) Backup, recovery, and resilience

Backups are not compliance evidence unless you can prove they work.

Typical evidence:

Backup policy with scope and frequency.

Backup job success reports.

Restore test evidence (test plan, test results, screenshots/log extracts, sign-off).

Recovery time objective (RTO) and recovery point objective (RPO) targets for critical systems, and evidence that they are achievable.

Common audit finding: backups run, but restore is untested or fails in practice.
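One way to turn a restore test into verifiable evidence is to compare a checksum of the restored file with a checksum of the original. The sketch below is illustrative only: the temporary files stand in for a real source file and its restored copy, and the function names `sha256_of` and `verify_restore` are hypothetical helpers rather than part of any backup product.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large backups do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_restore(original: Path, restored: Path) -> dict:
    """Return a small record suitable for attaching to a restore test report."""
    expected = sha256_of(original)
    actual = sha256_of(restored)
    return {
        "file": original.name,
        "expected": expected,
        "actual": actual,
        "match": expected == actual,
    }


# Demo: temporary files stand in for a production file and its restored copy.
with tempfile.TemporaryDirectory() as tmp:
    source = Path(tmp) / "payroll.csv"
    restored = Path(tmp) / "payroll_restored.csv"
    source.write_bytes(b"id,amount\n1,100\n")
    restored.write_bytes(source.read_bytes())
    result = verify_restore(source, restored)
    print(result["file"], result["match"])
```

In practice the live source may have changed since the backup was taken, so it is usually safer to compare against a checksum recorded at backup time, if your tooling provides one.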

8) Supplier and cloud risk management

If vendors process personal data or host critical systems, auditors will test your oversight.

Typical evidence:

Vendor inventory with criticality, data types, and contract owners.

Due diligence records (security questionnaires, risk ratings, remediation follow-up).

Contractual controls (data protection clauses, confidentiality, breach notification, subcontractor rules).

Independent assurance evidence where available (for example, ISO 27001 certificates or System and Organization Controls (SOC) reports supplied by the vendor).

Auditors also like to see a clear statement of the shared responsibility model for major cloud platforms (what the provider secures vs what you must configure and monitor).

9) Secure change management and SDLC controls

This is especially important for organisations that build software, integrate systems, or frequently change configurations.

Typical evidence:

Change management policy and approval workflow.

Sample change tickets (request, risk/impact assessment, approvals, implementation evidence, rollback plan).

Evidence of code review or peer review where applicable.

Security testing approach (for example, dependency management records and, where used, application security testing reports).

10) Training, awareness, and culture

Auditors are not just checking that training exists. They check whether it is targeted, repeated, and measured.

Typical evidence:

Training programme (who must take what, how often).

Completion records and reminders/escalations for non-completion.

Role-based training for higher-risk teams (IT admins, HR, customer service, compliance).

Evidence of awareness activities (posters, internal newsletters, phishing simulations if used).

11) Physical security (often forgotten)

Even highly digital organisations can fail basic physical controls.

Typical evidence:

Visitor log process and badge controls.

Server room access lists and periodic review.

Asset handling procedures for laptops and removable media.

A practical “evidence pack” table you can use

Use a table like the one below as a starting point for your audit folder structure.

| Control area | Evidence examples | What auditors test | Frequent gap |
| --- | --- | --- | --- |
| Governance | Policies, approvals, minutes, risk register | Ownership and oversight | Policies not reviewed or not adopted |
| Data inventory | System list, data flows, owners, retention | You know where personal data is | Inventory incomplete or outdated |
| IAM | MFA proof, access approvals, leaver controls, access reviews | Least privilege and lifecycle management | Leavers not removed, no review trail |
| Vulnerability mgmt | Scan results, remediation tickets, patch reports | Timely risk reduction | Findings sit open too long |
| Logging/monitoring | Log coverage, alert tickets, review records | Detect and respond capability | Logs kept but not reviewed |
| Incident readiness | IR plan, incident register, tabletop results | Containment, escalation, learning | No exercises, weak documentation |
| Backup/restore | Backup reports, restore test evidence | Recoverability | No restore tests |
| Vendors/cloud | Due diligence, contracts, assurance reports | Third-party governance | Contracts missing key clauses |
| Training | Completion logs, role-based training | Competence and awareness | One-off training, no metrics |

How to make your evidence “audit-ready” (so it passes a sceptical review)

A common mistake is collecting documents the week before the audit. A better approach is to build a small, repeatable system.

Create an evidence register (not just a folder)

An evidence register is a simple spreadsheet that lists:

Control name

Evidence item

Source system (HR, M365, identity provider, ticketing tool)

Owner

Frequency (monthly, quarterly)

Location (link)

This reduces last-minute scrambling and demonstrates maturity.
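The register columns above map naturally onto a small data structure. The sketch below models them in Python and flags items that are overdue for collection. The `last_collected` field, the intervals in `MAX_AGE_DAYS`, and the sample rows and `example.com` links are assumptions added for illustration. A spreadsheet with the same columns works equally well.

```python
from dataclasses import dataclass
from datetime import date

# Assumed maximum age in days for each frequency label.
MAX_AGE_DAYS = {"monthly": 31, "quarterly": 92}


@dataclass
class EvidenceItem:
    control: str
    evidence: str
    source_system: str
    owner: str
    frequency: str        # "monthly" or "quarterly"
    location: str         # link to the stored evidence
    last_collected: date  # assumption: extra column used to track freshness


# Hypothetical register rows.
register = [
    EvidenceItem("Access reviews", "Quarterly review sign-off", "Identity provider",
                 "IT Manager", "quarterly",
                 "https://example.com/evidence/iam-review", date(2025, 1, 15)),
    EvidenceItem("Patch compliance", "Monthly patch report", "Ticketing tool",
                 "Infrastructure Lead", "monthly",
                 "https://example.com/evidence/patching", date(2025, 5, 28)),
]


def stale_items(items, as_of):
    """Return register entries whose last collection is older than their frequency allows."""
    return [
        item for item in items
        if (as_of - item.last_collected).days > MAX_AGE_DAYS[item.frequency]
    ]


overdue = stale_items(register, as_of=date(2025, 6, 10))
for item in overdue:
    print(f"{item.control}: chase {item.owner}")
```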

Show sampling and continuity

If you claim “access is approved”, include 5 to 10 sampled tickets from the audit period. If you claim “alerts are reviewed weekly”, include 4 to 8 weeks of review evidence.
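Both ideas, sampling and continuity, can be made repeatable with a few lines of code. The sketch below draws a reproducible random sample of tickets and lists any week in the period with no recorded review. The ticket identifiers, review dates, and seed are hypothetical. Recording the seed with the sample lets an auditor re-run the selection.

```python
import random
from datetime import date, timedelta


def sample_tickets(ticket_ids, size, seed):
    """Draw a reproducible random sample; the same seed gives the same selection."""
    rng = random.Random(seed)
    return sorted(rng.sample(ticket_ids, min(size, len(ticket_ids))))


def missing_weeks(review_dates, period_start, weeks):
    """Return the start date of each 7-day window that contains no review."""
    gaps = []
    for n in range(weeks):
        start = period_start + timedelta(weeks=n)
        end = start + timedelta(days=7)
        if not any(start <= d < end for d in review_dates):
            gaps.append(start)
    return gaps


# Hypothetical access request tickets from the audit period.
tickets = [f"REQ-{n}" for n in range(1001, 1061)]
sample = sample_tickets(tickets, size=8, seed=2025)
print(sample)

# Hypothetical dates on which the weekly alert review was recorded.
reviews = [date(2025, 4, 7), date(2025, 4, 14), date(2025, 4, 28)]
gaps = missing_weeks(reviews, period_start=date(2025, 4, 7), weeks=4)
print(gaps)
```

A gap in the output is not automatically a failure, but it is a week you should be ready to explain before the auditor asks.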

Preserve integrity and version control

Auditors will trust evidence more when it comes from:

Exported system reports (date stamped)

Ticketing systems with immutable histories

Version-controlled documents (with review and approval trails)

This matters even more as organisations adopt generative AI. If staff use AI to draft incident summaries, policies, or audit responses, ensure you have a clear internal rule on attribution and review. Resources like Detection Drama’s AI detection and humanisation overview can help compliance teams understand how easily text can be altered, which is exactly why your audit evidence should rely on system records and approvals, not narrative statements alone.
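Returning to the integrity point above, one way to show that exported reports have not been edited after collection is to record a hash of each file at collection time and re-check it later. The sketch below is a minimal illustration using temporary files. The file names, the helper names `build_manifest` and `changed_files`, and the idea of storing the manifest alongside the sign-off are assumptions, not a prescribed method.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def build_manifest(folder: Path) -> dict:
    """Map each file's path (relative to the folder) to the SHA-256 of its contents."""
    return {
        str(path.relative_to(folder)): hashlib.sha256(path.read_bytes()).hexdigest()
        for path in sorted(folder.rglob("*"))
        if path.is_file()
    }


def changed_files(folder: Path, manifest: dict) -> list:
    """Return files whose current hash differs from the manifest, or that are missing."""
    current = build_manifest(folder)
    return sorted(name for name in manifest if current.get(name) != manifest[name])


# Demo: a temporary folder stands in for an evidence pack.
with tempfile.TemporaryDirectory() as tmp:
    pack = Path(tmp)
    (pack / "access_review_q1.csv").write_text("user,role\nkreid,admin\n")
    (pack / "patch_report_march.csv").write_text("host,status\nsrv01,patched\n")

    manifest = build_manifest(pack)
    # Keep this JSON outside the evidence folder, for example with the sign-off record.
    manifest_json = json.dumps(manifest, indent=2)

    # Simulate someone editing a report after it was collected.
    (pack / "patch_report_march.csv").write_text("host,status\nsrv01,missing\n")
    altered = changed_files(pack, manifest)
    print(altered)
```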

What auditors often ask in interviews (and how to prepare)

Expect interviews with IT, HR, operations, and compliance. Auditors often test whether teams can explain the control without reading the policy.

Prepare your control owners to answer:

“Show me how a new employee gets access, and how you remove it when they leave.”

“Who reviews privileged access, and where is the last review documented?”

“How do you know backups are recoverable? Show the last restore test.”

“When was the last security incident, and what changed afterwards?”

“Which vendors process personal data, and how do you assess them?”

If the answers rely on “we usually do it”, you have a documentation gap.

Fast self-check: are you ready for a data security compliance audit?

You are closer to audit-ready when you can produce, within 24 to 48 hours:

A current system and data inventory

The last quarter of access approvals and access reviews

Patch and vulnerability remediation evidence

Restore test results

Incident response exercise outcomes

A vendor list with due diligence and contracts

Training completion metrics

If any of these take a week to assemble, that is a signal to build an evidence register and a recurring collection routine.

Frequently Asked Questions

Is a written security policy enough to prove data security compliance?

A policy is only design evidence. Auditors typically require operating evidence too, such as access reviews, patch reports, incident tickets, and restore test results.

How far back do auditors usually look for evidence?

It depends on scope, but many audits sample the last 3 to 12 months. For some controls (like access reviews), auditors may expect a quarterly trail.

What is the single most common audit failure for security controls?

Lack of proof that controls operate consistently. For example, MFA is “required” but not enforced everywhere, or backups run but restores are never tested.

Do small Jamaican organisations need the same level of evidence as large enterprises?

The evidence can be scaled, but the principles remain the same: know your data, control access, protect systems, manage vendors, train staff, and document what you do.

How should we store audit evidence?

Use a central, access-controlled repository with clear naming conventions and retention rules, and rely on system exports and ticketing records whenever possible.

Need help assembling an audit-ready evidence pack in Jamaica?

Privacy & Legal Management Consultants Ltd. (PLMC) supports organisations in Jamaica with data protection implementation, cyber security services, GRC integration, training sessions, and practical risk assessment tools.

If you are preparing for an audit or want to strengthen your documentation and operating evidence, you can start with a free consultation via Privacy & Legal Management Consultants Ltd.